Kafka Offset

You can use the Kafka Offset job entry to reset the offset value of all partitions in a topic based upon a given timestamp or epoch value.
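Conceptually, resetting offsets by time means that each partition's position moves to the earliest message whose timestamp is at or after the given time. This mirrors the documented behavior of the Kafka consumer's `offsetsForTimes` lookup. The following sketch models that lookup in memory. It assumes each partition is a list of `(offset, timestamp_ms)` records in offset order. The function names and data layout are hypothetical and are not part of the Kafka Offset entry. For a partition with no message at or after the target time, such as a future timestamp, the sketch returns `None`, as `offsetsForTimes` does. How the entry itself handles that case is not described here.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical model: a partition is a list of (offset, timestamp_ms) records in offset order.
Partition = List[Tuple[int, int]]


def offset_for_time(records: Partition, target_ms: int) -> Optional[int]:
    """Return the earliest offset whose timestamp is >= target_ms, or None."""
    for offset, ts in records:
        if ts >= target_ms:
            return offset
    # No message at or after the target time (for example, a future timestamp).
    return None


def reset_offsets(topic: Dict[int, Partition], target_ms: int) -> Dict[int, Optional[int]]:
    """Compute the new offset for every partition of one topic."""
    return {partition: offset_for_time(records, target_ms)
            for partition, records in topic.items()}


if __name__ == "__main__":
    topic = {
        0: [(0, 1_000), (1, 2_000), (2, 3_000)],
        1: [(0, 1_500), (1, 2_500)],
    }
    print(reset_offsets(topic, 2_000))   # {0: 1, 1: 1}
    print(reset_offsets(topic, 10_000))  # {0: None, 1: None}
```

Every partition is looked up independently. As a result, the same timestamp can map to different offsets in different partitions.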

General

The following field is general to this job entry:

- Job Name: Specify the unique name of the Kafka Offset entry on the canvas. You can customize the name or leave it as the default.

Options

The Kafka Offset entry includes three tabs: Setup, Options, and Offset Settings. Each tab is described below.

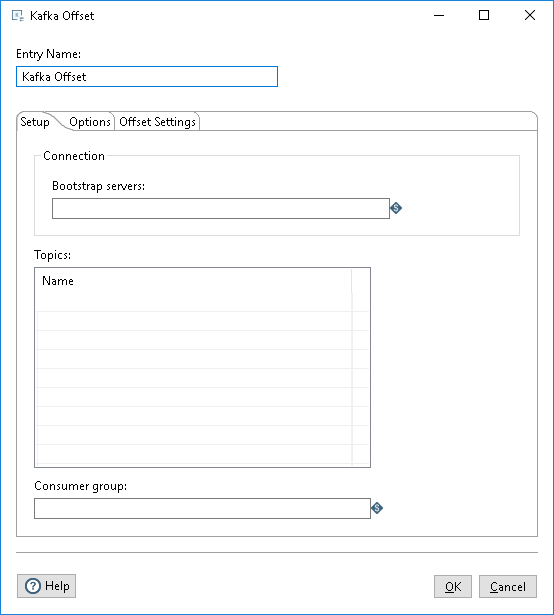

Setup tab

In this tab, define the connections used for receiving messages, topics to which you want to subscribe, and the consumer group for the topics.

| Option | Description |
| --- | --- |
| Bootstrap servers | Specify the bootstrap servers from which you want to receive Kafka streaming data. |
| Topics | Enter the name of each Kafka topic from which you want to consume streaming data (messages). You must include all topics that you want to consume. |
| Consumer group | Enter the name of the consumer group that you want this consumer to join. Within a consumer group, each consumer is assigned a subset of the partitions from the topics it subscribes to, which locks those partitions. Each instance of a Kafka Consumer step runs only a single consumer thread. |
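The following sketch illustrates how the partitions of a topic can be split among the members of a consumer group. It follows the idea behind Kafka's range assignment strategy (`RangeAssignor`). Partitions and consumers are sorted, and each consumer receives a contiguous block of roughly equal size. When the counts do not divide evenly, the first consumers each receive one extra partition. This is a simplified model for explanation only and is not the entry's code. Kafka supports other assignors, and the one in effect depends on the `partition.assignment.strategy` consumer setting. The function name is hypothetical.

```python
from typing import Dict, List


def range_assign(partitions: List[int], consumers: List[str]) -> Dict[str, List[int]]:
    """Split one topic's partitions into contiguous ranges, one per consumer."""
    if not consumers:
        return {}
    parts = sorted(partitions)
    members = sorted(consumers)
    per_consumer, extra = divmod(len(parts), len(members))
    assignment: Dict[str, List[int]] = {}
    for i, member in enumerate(members):
        # The first `extra` consumers each take one additional partition.
        start = per_consumer * i + min(i, extra)
        length = per_consumer + (1 if i < extra else 0)
        assignment[member] = parts[start:start + length]
    return assignment


if __name__ == "__main__":
    print(range_assign([0, 1, 2, 3, 4], ["c2", "c1"]))  # {'c1': [0, 1, 2], 'c2': [3, 4]}
```

No partition is given to more than one member, which is why a partition held by one consumer is unavailable to the others in the same group.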

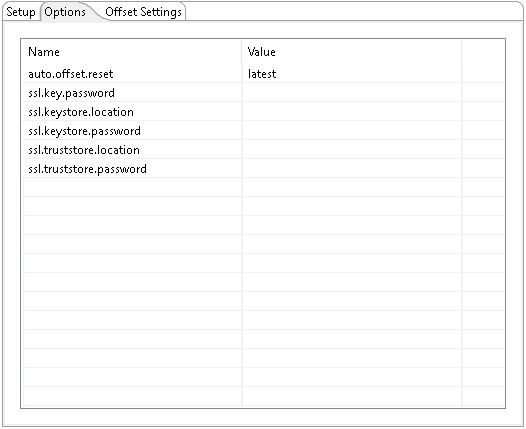

Options tab

Use this tab to specify the parameters that are sent to the broker when connecting. The parameter values can be encrypted. A few of the most common options are included for your convenience, and you can enter any other Kafka property. For more information about these properties, see the Apache Kafka documentation: https://kafka.apache.org/documentation/.
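Taken together, the Setup and Options tabs describe a set of Kafka client properties. The following sketch shows one plausible way to combine them into a single property map. It uses the standard Kafka client property names `bootstrap.servers` and `group.id`. Two details are assumptions: how the entry actually merges the two tabs, and whether an Options-tab entry may override a Setup-tab value. The helper name is hypothetical.

```python
from typing import Dict, Mapping


def build_consumer_properties(bootstrap_servers: str,
                              consumer_group: str,
                              options: Mapping[str, str]) -> Dict[str, str]:
    """Combine Setup-tab values with Options-tab properties (hypothetical helper)."""
    props: Dict[str, str] = {
        "bootstrap.servers": bootstrap_servers,
        "group.id": consumer_group,
    }
    for name, value in options.items():
        name = name.strip()
        if not name:
            raise ValueError("Kafka property names must not be empty")
        # Assumption: an Options-tab entry replaces a Setup-tab value with the same name.
        props[name] = value
    return props


if __name__ == "__main__":
    props = build_consumer_properties(
        "broker1:9092",
        "offset-reset-group",
        {"auto.offset.reset": "earliest", "security.protocol": "SSL"},
    )
    for key, value in props.items():
        print(f"{key}={value}")
```

The broker address, group name, and option values above are placeholders. Substitute the values that apply to your cluster.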

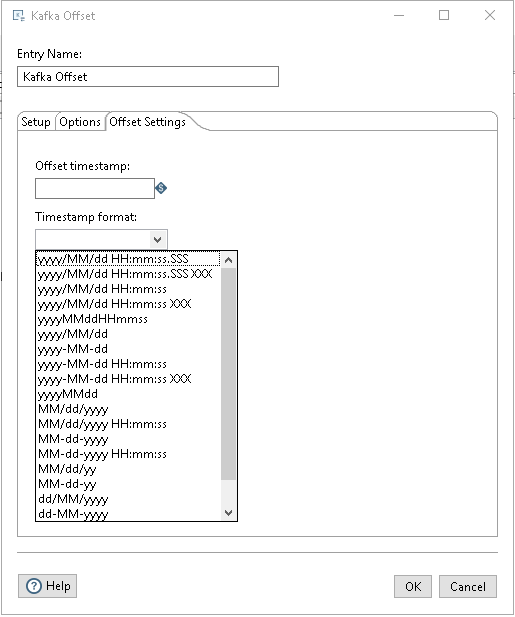

Offset Settings tab

To reset the offset value, set the start date in the Offset timestamp field and select the format in the Timestamp format field.

| Option | Description |
| --- | --- |
| Offset timestamp | Specifies the start timestamp used to determine the new offset. The timestamp can be a past or future time. When the job runs, the offset of each partition is reset to the position that corresponds to the given timestamp. |
| Timestamp format | Specifies the format of the value in the Offset timestamp field. If you enter an epoch value, no timestamp format is needed. |
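Kafka's time-based offset lookup takes timestamps in milliseconds since the Unix epoch. A formatted Offset timestamp therefore has to be converted to that form, while an epoch value can be used directly. The following sketch illustrates the conversion under three assumptions. First, the format is written in Python `strptime` notation, which may differ from the syntax the entry's Timestamp format field accepts. Second, a timestamp without an explicit zone is interpreted as UTC. Third, epoch values are given in milliseconds. The helper name is hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def to_epoch_millis(value: str, timestamp_format: Optional[str] = None) -> int:
    """Convert an Offset timestamp to epoch milliseconds (hypothetical helper).

    Without a format, the value is treated as an epoch value in milliseconds.
    With a format, it is parsed with datetime.strptime; a naive result is taken as UTC.
    """
    if not timestamp_format:
        return int(value.strip())
    parsed = datetime.strptime(value.strip(), timestamp_format)
    if parsed.tzinfo is None:
        parsed = parsed.replace(tzinfo=timezone.utc)
    # Integer division of timedeltas avoids floating-point rounding.
    return (parsed - EPOCH) // timedelta(milliseconds=1)


if __name__ == "__main__":
    print(to_epoch_millis("2024-01-01 00:00:00", "%Y-%m-%d %H:%M:%S"))  # 1704067200000
    print(to_epoch_millis("1704067200000"))                             # 1704067200000
```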

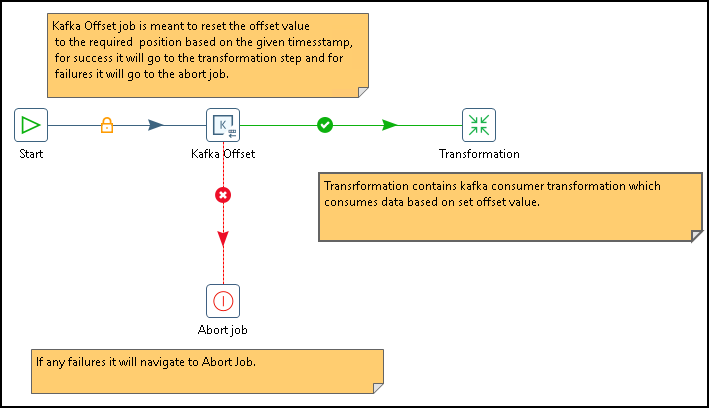

Examples

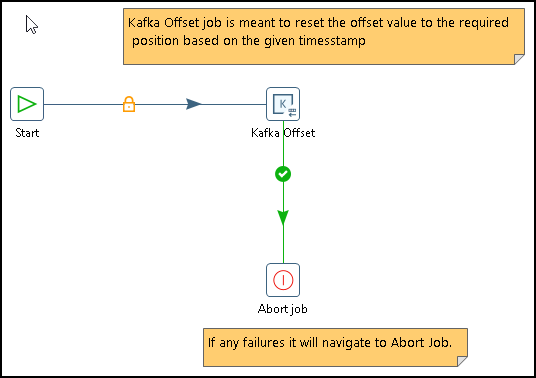

The kafka_job_abort.kjb sample job in the design-tools/data-integration/plugins/kafka-job-plugins-zip-9.5.1.0-110/kafka-offset-job/samples/transformations directory demonstrates the capabilities of this job entry using the Abort step.

The kafka_job.kjb sample job in the design-tools/data-integration/plugins/pentaho-streaming-kafka-plugin-zip-9.5.1.0-110/pentaho-streaming-kafka-plugin/samples/transformations directory demonstrates this job entry with both successful and failed runs. Comments in the transformations explain how the steps are used.