Kafka Producer

The Kafka Producer step publishes messages in near real time across worker nodes, where multiple subscribed members have access to them. Each Kafka Producer step publishes a stream of records to a single Kafka topic.

General

Enter the following information in the transformation step name field.

- Step name: Specifies the unique name of the transformation step on the canvas. The step name is set to Kafka Producer by default.

Options

The Kafka Producer step features a Kafka connection setup tab and a configuration property options tab. Each tab is described below.

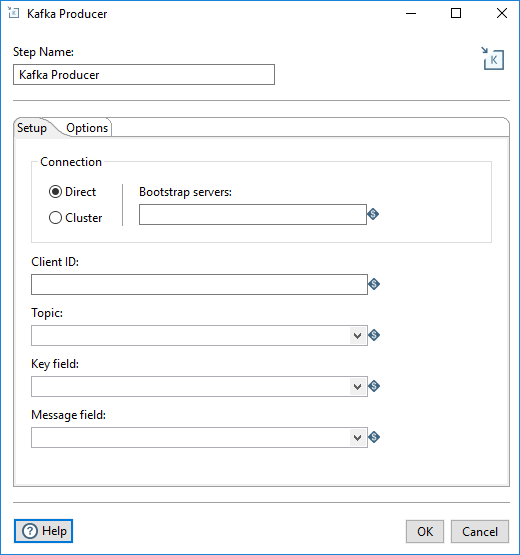

Setup tab

Fill in the following fields.

| Option | Description |
| --- | --- |
| Connection | Select a connection type: |
| Client ID | The unique Client identifier, used to identify and set up a durable connection path to the server to make requests and to distinguish between different clients. |
| Topic | The category to which records are published. |
| Key Field | In Kafka, all messages can be keyed, allowing for messages to be distributed to partitions based on their keys in a default routing scheme. If no key is present, messages are randomly distributed to partitions. |
| Message Field | The individual record contained in a topic. |
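The key-based routing described for the Key Field can be illustrated with a minimal sketch. It assumes a simple "hash the key, then take the remainder by the partition count" scheme. The function `choose_partition`, the sample rows, and the partition count of 6 are hypothetical, and CRC32 is only a stand-in hash (Kafka's Java client hashes keys with murmur2), so the partition numbers printed here will not match what a real cluster assigns.

```python
import zlib


def choose_partition(key: bytes, num_partitions: int) -> int:
    """Map a record key to a partition index in [0, num_partitions)."""
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    # CRC32 is an illustrative stand-in; it is NOT the hash Kafka uses.
    return zlib.crc32(key) % num_partitions


# Hypothetical rows: "customer_id" plays the role of the Key Field,
# "payload" plays the role of the Message Field.
rows = [
    {"customer_id": "c-17", "payload": "order created"},
    {"customer_id": "c-42", "payload": "order created"},
    {"customer_id": "c-17", "payload": "order shipped"},
]

for row in rows:
    key = row["customer_id"].encode("utf-8")
    print(row["customer_id"], "->", choose_partition(key, 6))
```

The property worth noticing is that both `c-17` rows map to the same partition, which is what preserves per-key ordering when records are keyed.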

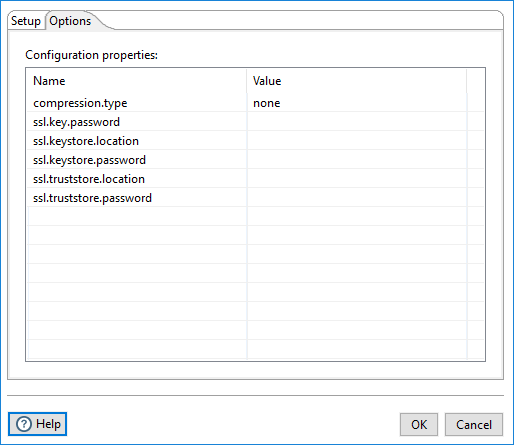

Options tab

Use this tab to configure the property options for the Kafka Producer broker sources. For more information on these property names, see the Apache Kafka documentation site: https://kafka.apache.org/documentation/.
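As a rough illustration of the kind of name and value pairs this tab accepts, the sketch below lists a few producer properties documented by Apache Kafka (`acks`, `batch.size`, `compression.type`, `linger.ms`). The values are examples only, not recommendations, and the helper `to_properties` is a hypothetical convenience for printing them in `name=value` form; it is not part of the step.

```python
# Example producer properties; the names come from the Apache Kafka
# producer configuration reference, the values are illustrative only.
producer_options = {
    "acks": "all",                 # wait for all in-sync replicas to acknowledge
    "compression.type": "snappy",  # compress record batches
    "linger.ms": "20",             # wait up to 20 ms to fill a batch
    "batch.size": "32768",         # maximum batch size in bytes
}


def to_properties(options: dict) -> str:
    """Render options as sorted name=value lines (hypothetical helper)."""
    return "\n".join(f"{name}={value}" for name, value in sorted(options.items()))


print(to_properties(producer_options))
```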

Metadata injection support

All fields of this step support metadata injection. You can use this step with ETL metadata injection to pass metadata to your transformation at runtime.