Set up the Pentaho Server to connect to a Hadoop cluster

This article is for IT administrators who need to configure Pentaho to connect to a Hadoop cluster for teams working with Big Data.

Pentaho can connect to Amazon Elastic MapReduce (EMR), Azure HDInsight (HDI), Cloudera Distribution for Hadoop (CDH) and Cloudera Data Platform (CDP), Google Dataproc, and Hortonworks Data Platform (HDP). Pentaho also supports related services such as HDFS, HBase, Hive, Oozie, Pig, Sqoop, Yarn/MapReduce, ZooKeeper, and Spark. You can connect to clusters and services from these Pentaho components:

- PDI client (Spoon), along with Kitchen and Pan command line tools

- Pentaho Server

- Analyzer (PAZ)

- Pentaho Interactive Reports (PIR)

- Pentaho Report Designer (PRD)

- Pentaho Metadata Editor (PME)

You can configure the Pentaho Server to connect to a Hadoop cluster through a compatibility layer called a driver. Pentaho regularly develops and releases new drivers, so you can stay up-to-date with the latest technological developments. To view which drivers are supported for this version of Pentaho, see the Components Reference.

When drivers for new Hadoop versions are released, you can download them from the Hitachi Vantara Lumada and Pentaho Support Portal and then add them to Pentaho to connect to the new Hadoop distributions. For more information about downloading and adding a new driver, see Adding a new driver.

Before you can add a named connection to a cluster, you must install a driver for the vendor and version of the Hadoop cluster that you are connecting to.

To learn about additional configurations for a specific distribution, click one of the following links:

- Advanced settings for connecting to an Amazon EMR cluster

- Advanced settings for connecting to Azure HDInsight

- Advanced settings for connecting to a Cloudera cluster

- Advanced settings for connecting to Cloudera Data Platform

- Advanced settings for connecting to Google Dataproc

- Advanced settings for connecting to a Hortonworks cluster

Before you begin

Before you connect to the Pentaho Server, set the connection path to the metastore, which is where these types of connections are stored.

Procedure

Navigate to the pentaho-server/pentaho-solutions/system/kettle/plugins/pentaho-big-data-plugin directory and open the plugin.properties file with any text editor.

Locate the hadoop.configurations.path property and set the value to the metastore directory. For example, /home/devuser/.pentaho/metastore.

Save and close the plugin.properties file.
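If you prefer to script this edit rather than make it by hand, the steps above can be sketched as follows. This is a minimal sketch, not a Pentaho utility: the function name `set_property` is hypothetical, and it assumes the property is written in the simple `key=value` form on a single line.

```python
from pathlib import Path


def set_property(properties_file: Path, key: str, value: str) -> None:
    """Set key=value in a .properties file, leaving all other lines untouched.

    Assumption: the property uses the plain `key=value` form on one line.
    """
    # ISO-8859-1 is the traditional encoding for Java .properties files.
    lines = properties_file.read_text(encoding="latin-1").splitlines()
    updated = False
    for i, line in enumerate(lines):
        stripped = line.strip()
        # Skip blank lines and comment lines.
        if not stripped or stripped.startswith(("#", "!")):
            continue
        name = stripped.split("=", 1)[0].strip()
        if name == key:
            lines[i] = f"{key}={value}"
            updated = True
    if not updated:
        # The property was not present, so append it.
        lines.append(f"{key}={value}")
    properties_file.write_text("\n".join(lines) + "\n", encoding="latin-1")
```

For example, calling `set_property(Path("plugin.properties"), "hadoop.configurations.path", "/home/devuser/.pentaho/metastore")` from inside the pentaho-big-data-plugin directory would apply the example value shown in the procedure. Back up the file before running a script like this.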

Install a driver for the Pentaho Server

Procedure

Verify that you are connected to a repository.

In the PDI client, select the View tab of your transformation or job.

Right-click the Hadoop clusters folder and click Add driver.

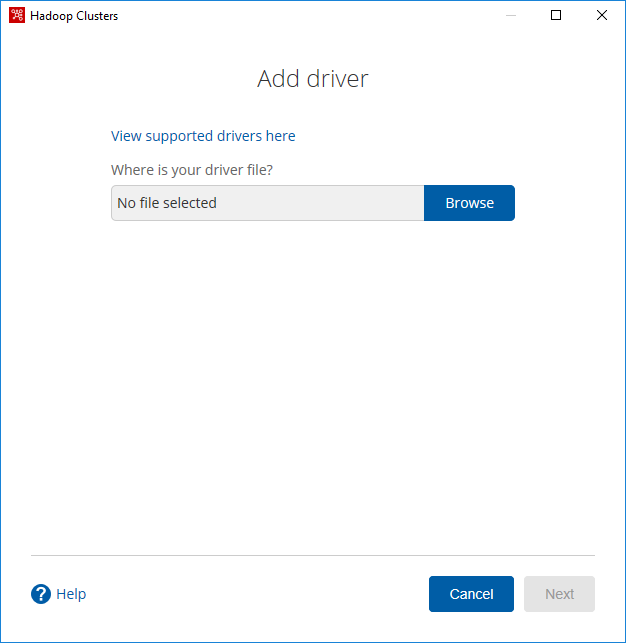

The Add driver dialog box appears.

Click Browse.

The Choose File to Upload dialog box appears.

Navigate to the directory where you downloaded your .kar file from the Lumada and Pentaho Support Portal.

Select the driver (.kar file) you want to add, click Open, and then click Next.

The selected file name appears in the Browse text field. The vendor distribution files contain their abbreviations in the .kar file names as shown below:

- Amazon EMR (emr)

- Azure HDInsight (hdi)

- Cloudera (cdh)

- Cloudera Data Platform (cdp)

- Google Dataproc (dataproc)

- Hortonworks (hdp)

Click Next.

The Congratulations dialog box appears, notifying you that you must restart the Pentaho Server and the PDI client. The installed driver is now available for selection in the Driver field in the New cluster and Import cluster dialog boxes.
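When several .kar files sit in the same download directory, the vendor abbreviations listed in this procedure can help you pick the right one. The following is a minimal sketch, assuming the abbreviation appears in the file name as its own run of letters (for example, separated by hyphens or followed by version digits). The function name `vendor_from_kar` and the file names mentioned afterward are hypothetical.

```python
import re
from pathlib import Path
from typing import Optional

# Abbreviations as listed in this article.
VENDOR_ABBREVIATIONS = {
    "emr": "Amazon EMR",
    "hdi": "Azure HDInsight",
    "cdh": "Cloudera",
    "cdp": "Cloudera Data Platform",
    "dataproc": "Google Dataproc",
    "hdp": "Hortonworks",
}


def vendor_from_kar(file_name: str) -> Optional[str]:
    """Return the vendor for a .kar file name, or None if it cannot be determined."""
    name = Path(file_name).name.lower()
    if not name.endswith(".kar"):
        return None
    # Break the base name into runs of letters and look for a known abbreviation.
    for token in re.split(r"[^a-z]+", name[: -len(".kar")]):
        if token in VENDOR_ABBREVIATIONS:
            return VENDOR_ABBREVIATIONS[token]
    return None
```

With this sketch, a hypothetical file named `example-shim-cdh61-1.0.kar` maps to Cloudera, while a file without a recognized abbreviation returns `None`.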

Manually install a driver for the Pentaho Server

Perform the following steps to manually install a driver for the Pentaho Server:

Procedure

Navigate to the directory where you downloaded your .kar file from the Lumada and Pentaho Support Portal.

Select the driver (.kar file) you want to add and copy it to the <pentaho home>/server/pentaho-server/pentaho-solutions/drivers directory on the machine with the Pentaho Server.

The vendor distribution files contain their abbreviations in the .kar file names as shown below:

- Amazon EMR (emr)

- Azure HDInsight (hdi)

- Cloudera (cdh)

- Cloudera Data Platform (cdp)

- Google Dataproc (dataproc)

- Hortonworks (hdp)

Restart the Pentaho Server.
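The copy step in this procedure can also be scripted, which may be useful when you install the same driver on several servers. The following is a minimal sketch, assuming the drivers directory already exists under the Pentaho home directory as described above. The function name `install_driver` is hypothetical, and the script does not restart the Pentaho Server for you.

```python
import shutil
from pathlib import Path


def install_driver(kar_file: Path, pentaho_home: Path) -> Path:
    """Copy a driver (.kar file) into the Pentaho Server drivers directory."""
    if kar_file.suffix.lower() != ".kar":
        raise ValueError(f"Not a .kar file: {kar_file}")
    # Directory layout taken from the manual installation step above.
    drivers_dir = (
        pentaho_home / "server" / "pentaho-server" / "pentaho-solutions" / "drivers"
    )
    if not drivers_dir.is_dir():
        raise FileNotFoundError(f"Drivers directory not found: {drivers_dir}")
    # copy2 preserves file metadata and returns the destination path.
    return Path(shutil.copy2(kar_file, drivers_dir))
```

The function raises an error rather than creating a missing drivers directory, because a missing directory usually means the Pentaho home path is wrong. After the copy succeeds, restart the Pentaho Server as described in the last step.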