Using the ORC Input step on the Spark engine

You can set up the ORC Input step to run on the Spark engine. Spark processes null values differently than the Pentaho engine, so you may need to adjust your transformation to successfully process null values according to Spark's processing rules.

Because of Cloudera Distribution Spark (CDS) limitations, the ORC Input step running on AEL cannot read Hive tables whose data files are in the ORC format when the Spark application runs in YARN mode. As an alternative, you can use the Parquet data format for columnar data with Impala.

Options

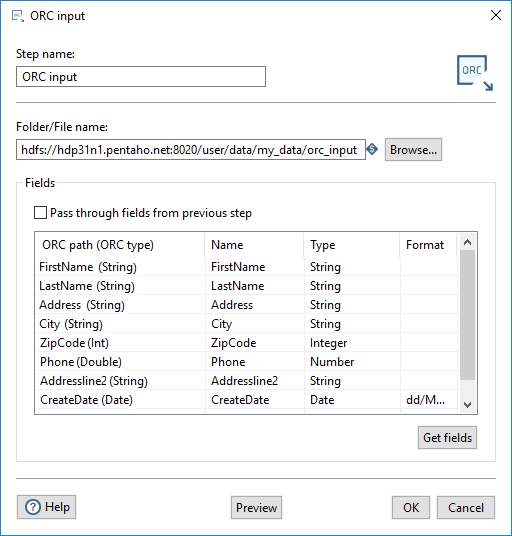

Enter the following information in the ORC Input step fields:

| Field | Description |
| --- | --- |
| Step name | Specify the unique name of the ORC Input step on the canvas. You can customize the name or use the provided default. |
| Folder/File Name | Specify the fully qualified URL of the source file or folder name for the input fields. Click Browse to display the Open File window and navigate to the file or folder. For the supported file system types, see Connecting to Virtual File Systems. The Spark engine reads all the ORC files in a specified folder as inputs. |
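The folder behavior described for Folder/File Name can be pictured with a small sketch. This is only an illustration in plain Python, not how the Spark engine is implemented: the function name `resolve_orc_inputs` is hypothetical, it handles local paths only (not VFS URLs), and filtering on the `.orc` extension is an assumption made for the example.

```python
from pathlib import Path
from typing import List


def resolve_orc_inputs(location: str) -> List[Path]:
    """Return the files that would be treated as input for a given location.

    Hypothetical helper: a folder expands to every ORC file directly inside
    it, while a single file is returned on its own.
    """
    path = Path(location)
    if path.is_dir():
        # Assumption: ORC files are recognized by their ".orc" extension.
        return sorted(
            p for p in path.iterdir() if p.is_file() and p.suffix == ".orc"
        )
    if path.is_file():
        return [path]
    raise FileNotFoundError(f"No such file or folder: {location}")
```

Pointing the helper at a folder returns every matching file in sorted order, which mirrors the idea that a folder is read as one combined input.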

Fields

The Fields section contains the following items:

- A Pass through fields from the previous step option that allows you to read the fields from the input file without redefining any of the fields.

- A table defining data about the columns to read from the ORC file.

The table in the Fields section defines the fields to read as input from the ORC file, the associated PDI field name, and the data type of the field. Enter the information for the ORC Input step fields as shown in the following table:

| Field | Description |
| --- | --- |
| ORC path (ORC type) | Specify the name of the field as it appears in the ORC data file or files, and its ORC data type. |
| Name | Specify the PDI name of the input field. |
| Type | Specify the PDI data type of the input field. |
| Format | Specify the date format when the Type specified is Date. |

You can define the fields manually, or you can provide a path to an ORC data file and click Get Fields to populate all the fields. When the fields are retrieved, the ORC type is converted into an appropriate PDI type. You can preview the data in the ORC file by clicking Preview. You can change the PDI type by using the Type drop-down or by entering the type manually.
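As a rough sketch of what one row of the Fields table carries, the record below models the four columns in Python. The class name `OrcFieldDef`, its attribute names, and the sample field values are all hypothetical, and the rule that a format is accepted only for the Date type is an assumption drawn from the Format description rather than confirmed step behavior.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class OrcFieldDef:
    """One row of the Fields table (hypothetical model, not a PDI class)."""

    orc_path: str   # field name as it appears in the ORC file
    orc_type: str   # ORC data type of that field
    name: str       # PDI field name
    pdi_type: str   # PDI data type
    date_format: Optional[str] = None  # only meaningful when pdi_type is Date

    def __post_init__(self) -> None:
        # Assumption: a format is only specified for Date fields.
        if self.date_format is not None and self.pdi_type != "Date":
            raise ValueError(
                f"Field {self.name!r}: a format applies only to the Date type"
            )


# Illustrative field definitions; the names and the pattern are made up.
fields = [
    OrcFieldDef("id", "BigInt", "id", "Integer"),
    OrcFieldDef("customer", "VarChar", "customer_name", "String"),
    OrcFieldDef("order_date", "Date", "order_date", "Date", "yyyy-MM-dd"),
]
```

Keeping the ORC-side and PDI-side names separate in the record reflects that the PDI field name does not have to match the name stored in the file.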

AEL types

In AEL, the ORC Input step automatically converts ORC rows to Spark SQL rows. The following table lists the type conversions.

| ORC Type | Spark SQL Type |
| --- | --- |
| Boolean | Boolean |
| TinyInt | Short |
| SmallInt | Short |
| Integer | Integer |
| BigInt | Long |
| Binary | Binary |
| Float | Float |
| Double | Double |
| Decimal | BigNumber |
| Char | String |
| VarChar | String |
| Timestamp | Timestamp |
| Date | Date |
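For quick checks while designing a transformation, the conversions in the table above can be written as a lookup. This is a minimal sketch: the dictionary simply restates the table, while the function name `spark_sql_type` and the handling of type parameters such as `Decimal(10,2)` are assumptions added for illustration.

```python
# Conversions copied from the AEL types table; keys are lowercased for lookup.
ORC_TO_SPARK_SQL = {
    "boolean": "Boolean",
    "tinyint": "Short",
    "smallint": "Short",
    "integer": "Integer",
    "bigint": "Long",
    "binary": "Binary",
    "float": "Float",
    "double": "Double",
    "decimal": "BigNumber",
    "char": "String",
    "varchar": "String",
    "timestamp": "Timestamp",
    "date": "Date",
}


def spark_sql_type(orc_type: str) -> str:
    """Return the Spark SQL type listed in the table for an ORC type name."""
    # Assumption: drop any parameters, for example "Decimal(10,2)" -> "decimal".
    base = orc_type.split("(", 1)[0].strip().lower()
    try:
        return ORC_TO_SPARK_SQL[base]
    except KeyError:
        raise ValueError(
            f"No conversion listed for ORC type: {orc_type!r}"
        ) from None
```

A type that is not in the table raises an error instead of returning a guess, because the table does not say how other ORC types are handled.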

Metadata injection support

All fields of this step support metadata injection. You can use this step with ETL metadata injection to pass metadata to your transformation at runtime.