Cassandra Input

The Cassandra Input step reads in data from a table (column family) of an Apache Cassandra database using CQL (Cassandra Query Language) version 3.x.

AEL considerations

When using the Cassandra Input step with the Adaptive Execution Layer, the following factor affects performance and results:

- Spark processes null values differently than the Pentaho engine. You will need to adjust your transformation to successfully process null values according to Spark's processing rules.

Options

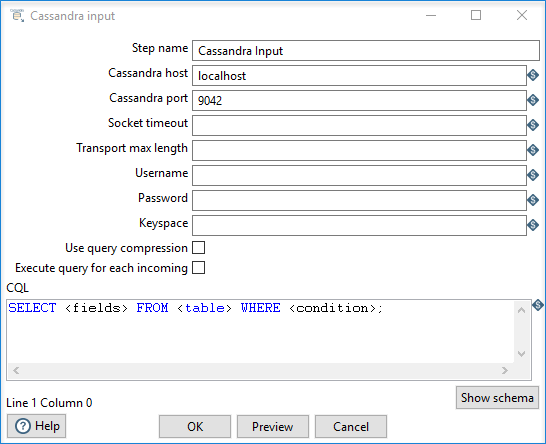

The following options are available for the Cassandra Input transformation step:

| Option | Description |

| Step name | Specify the unique name of the Cassandra Input step on the canvas. You can customize the name or leave it as the default. |

| Cassandra host | Specify the host name for the connection to the Cassandra server. |

| Cassandra port | Specify the port number for the connection to the Cassandra server. |

| Socket timeout | Set an optional connection timeout period, specified in milliseconds. |

| Transport max length | Set an optional maximum object size that can be sent. |

| Username | Specify the username of the target keyspace and/or table authentication details. |

| Password | Specify the password of the target keyspace and/or table authentication details. |

| Keyspace | Specify the keyspace (database) name. |

| Use query compression | Select if you want to compress the text of the CQL query before sending it to the server. |

| Execute query for each incoming row | Select to execute a query for each input row. |

| Show schema | Click to open a dialog box that shows metadata for the table named in the CQL SELECT query. |

| Preview | Click to display the rows generated by this step. |

CQL SELECT query

Cassandra is a sparse column oriented database similar to HBase. It is possible for rows to contain varying numbers of columns which might or might not be defined in the metadata for the table (column family). The Cassandra Input step can emit columns that are not defined in the metadata for the table in question if they are explicitly named in the SELECT clause. Cassandra Input uses type information present in the metadata for a table. This, at a minimum, includes a default type (column validator) for the table. If there is explicit metadata for individual columns available, then this is used for type information, otherwise the default validator is used.

name1..nameN, for specifying columns in a SELECT query.You can enter your CQL SELECT statement for querying the table in the large text box at the bottom of the dialog box. Only a single SELECT query is accepted by the step. The following example query shows the possible format of the statement:

SELECT [FIRST N] [REVERSED] <SELECT EXPR> FROM <TABLE> [USING <CONSISTENCY>] [WHERE <CLAUSE>] [LIMIT N];

SELECT queries may name columns explicitly (in a comma separated list) or use the * wildcard. If you use the * wildcard, then only those columns defined in the metadata for the table in question are returned. If columns are selected explicitly, then the name of each column must be enclosed in single quotation marks.

The following table describes the elements of the CQL SELECT statement:

| Element | Description |

FIRST N |

Returns the first N (where N is determined by the column sorting strategy used for the table in question) column values from each row. If the table in question is sparse then it may result in a different N (or less) column values appearing from one row to the next. Because PDI deals with a constant number of fields between steps in a transformation, Cassandra rows that do not contain particular columns are output as rows with null field values for non-existent columns. Cassandra's default for FIRST (if omitted from the query) is 10,000 columns. If a query is expected to return more than 10,000 columns, then an explicit FIRST must be added to the query. |

REVERSED |

Reverses the sort order of the columns returned by Cassandra for each row. It may affect which values result from a FIRST N option, but does not affect the order of the columns output by Cassandra Input. |

WHERE clause |

Filters the rows that appear in results. The clause can filter on any of the following factors:

Key filters are specified using the |

LIMIT |

Limits the number of rows returned. If the query is expected to return more than 10,000 rows, an explicit LIMIT clause must be added to the query. If omitted, Cassandra assumes a default limit of 10,000 rows to be returned by the query. |

WHERE Clause

When terms appear on both sides of a relational operator, the filter is applied to an indexed column. With column index filters, the term on the left of the operator is the name, and the term on the right is the value to filter on. When filtering on indexed columns, at least one equality operator must be present. Using inequality operators will result in ranges that are inclusive of the terms (for example, > is the same as >=, and < is the same as <=).

A WHERE clause may be used to filter rows that appear in the results.

SELECT ... WHERE KEY = keyname AND name1 = value1

SELECT ... WHERE KEY >= startkey and KEY =< endkey AND name1 = value1

SELECT ... WHERE KEY IN ('<key>', '<key>', '<key>', ...)

Metadata injection support

All fields of this step support metadata injection. You can use this step with ETL metadata injection to pass metadata to your transformation at runtime.